The Research

Valid and reliable assessments

All AI-powered test scores are grounded in extensive research, providing a reliable basis for evaluating the test-taker's language proficiency.

Rooted in sound research

Our assessment evaluates a test-taker’s English language skills in real-life situations, especially in professional and academic contexts where English communication is essential.

To achieve this, the AI-powered test assesses the accuracy, variety, and clarity of spoken and written English, covering both linguistic and some pragmatic aspects.

Our adaptive listening and reading tests are based on authentic audio and text passages.

The test is structured so that its scores can be used to evaluate the test-taker's language proficiency. All score interpretations, as well as the tests themselves, are built on a strong theoretical foundation.

Concurrent Validity of the Speaknow Assessment – A Research Study

Background

The Speaknow Assessment is a computerized, adaptive test of receptive and productive language ability. It reports an overall CEFR level and a composite score from 20 to 120, made up of the CEFR scores of its different scored parameters (Vocabulary, Grammar, Phonology, Fluency, and Cohesion). One of its purposes is to support higher education admissions and placement into college or preparatory English language programs, so it is important to ascertain whether the test is valid for this purpose. One way to assess validity is concurrent validity: how well the test's results agree with those of other, similar tests.

In Israel, the commonly used English language exams for admissions and placement are the Amir, Amiram, and Psychometric tests. All three tests measure receptive English proficiency, specifically for academic contexts. The Amir and Psychometric tests are both paper-and-pencil tests. The Amiram test is a computerized, adaptive version of the Amir test. The Psychometric test also has a computerized version. All three tests measure English proficiency through multiple-choice questions of three types: sentence completion, restatement, and reading comprehension. As such, these tests use reading comprehension and vocabulary as measures of English proficiency. All three tests are scored on a scale of 50-150, are produced by the Israeli National Institute of Testing and Evaluation (NITE), and are highly correlated with each other (NITE, 2021).

The Speaknow Assessment is primarily a test of productive language ability (the ability to produce language), whereas the Amir, Amiram, and Psychometric exams test receptive language ability (the ability to understand language). These proficiencies are nevertheless related: although most people tend to have stronger receptive than productive skills, the two types of proficiency are correlated. We would therefore expect the students who are stronger in production to also be the students who receive the higher scores in reception. It follows that, if a test is valid, its ranking of students should be similar to the ranking produced by another, established test. This study examines the relationship of the Speaknow Assessment of Spoken English with the Amir, Amiram, and Psychometric tests.

Methodology

Students applying to an English program at a college in Israel (n=51) took the Speaknow Assessment as part of their admissions process. The Amir/Amiram/Psychometric scores were compiled by the college and reported to Speaknow with identifying information removed.

Results

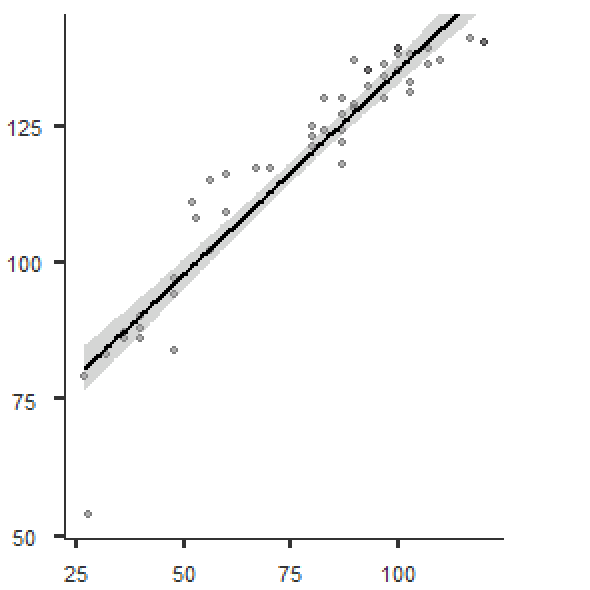

The scores of the two tests were compared with each other. The Amir/Amiram/Psychometric tests are scored on a scale of 50-150. The Speaknow Assessment is scored on an overall scale of 20-120, and each test also receives an overall CEFR level (A1, A2, B1, B2, C1, C2), with A1 the lowest and C2 the highest. Pearson and Spearman correlations were used to test the relationships between the two tests. Pearson correlations were used to compare the overall scores of both tests, because both types of scores are continuous. Spearman correlations were used to compare the Amir/Amiram/Psychometric scores with the CEFR level obtained on the Speaknow Assessment, because the CEFR levels are ordinal (ranked categories) rather than continuous.
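The correlation analysis described above can be illustrated with a minimal Python sketch. It is not the study's actual analysis code, and the score lists are invented purely for illustration; they are not data from the 51 students. The function names (`pearson`, `average_ranks`, `spearman`) and the `CEFR_ORDER` mapping are assumptions introduced here. Pearson's r is computed on the two continuous scores, and Spearman's rho is computed as Pearson's r on ranks after the CEFR levels are converted to ordered integers.

```python
import math

# Assumed ordering of CEFR levels, lowest to highest, as stated in the text.
CEFR_ORDER = ["A1", "A2", "B1", "B2", "C1", "C2"]


def pearson(xs, ys):
    """Pearson product-moment correlation between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)


def average_ranks(values):
    """1-based ranks; tied values share the mean of the ranks they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with the one at position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman(xs, ys):
    """Spearman rank correlation: Pearson correlation of the average ranks."""
    return pearson(average_ranks(xs), average_ranks(ys))


# HYPOTHETICAL example data, not taken from the study.
nite_scores = [85, 98, 104, 112, 120, 133]            # 50-150 scale
speaknow_scores = [42, 55, 51, 70, 78, 95]            # 20-120 scale
speaknow_cefr = ["A2", "B1", "B1", "B2", "B2", "C1"]  # ordinal levels

# Convert CEFR labels to integers that preserve their order.
cefr_as_int = [CEFR_ORDER.index(level) for level in speaknow_cefr]

r = pearson(nite_scores, speaknow_scores)
rho = spearman(nite_scores, cefr_as_int)
print(f"Pearson r (overall scores): {r:.3f}")
print(f"Spearman rho (CEFR level):  {rho:.3f}")
```

Ranking with averaged ties matters here because many test-takers share the same CEFR level. In practice, an established statistics package such as SciPy (`scipy.stats.pearsonr`, `scipy.stats.spearmanr`) would typically be used instead, since it also reports p-values.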

Relationship between the Speaknow Overall Score and the Amir/Amiram/Psychometric score

Figure 1 Relationship between Speaknow Overall Score and Amir/Amiram/Psychometric Score